Authors:

(1) Katrin Fischer, Annenberg School for Communication at the University of Southern California, Los Angeles (Email: katrinfi@usc.edu);

(2) Donggyu Kim, Annenberg School for Communication at the University of Southern California, Los Angeles (Email: donggyuk@usc.edu);

(3) Joo-Wha Hong, Marshall School of Business at the University of Southern California, Los Angeles (Email: joowhaho@marshall.usc.edu).


II. METHOD

An online survey was distributed through Amazon Mechanical Turk. A total of 239 participants (86 female, 153 male) passed the attention check and completed all questionnaires. Empirical estimates of the sample sizes needed for 0.8 power in mediation analysis indicate that this sample is adequate to detect small-to-medium effects when using Hayes’ PROCESS (version 3 or later), which uses percentile bootstrap confidence intervals by default [20].
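To make the percentile bootstrap concrete, the following is a minimal sketch of how such a confidence interval can be computed for an indirect effect in a simple mediation model (X → M → Y). It is not the PROCESS macro and not the authors' analysis: the data are simulated, and the function names, effect sizes, number of resamples, and seeds are all assumptions chosen for illustration.

```python
import numpy as np


def indirect_effect(x, m, y):
    """Product-of-coefficients estimate a*b for the path X -> M -> Y."""
    # Path a: slope of M regressed on X (with intercept).
    a = np.polyfit(x, m, 1)[0]
    # Path b: coefficient of M when Y is regressed on M and X (with intercept).
    design = np.column_stack([np.ones_like(x), m, x])
    coefs, *_ = np.linalg.lstsq(design, y, rcond=None)
    return a * coefs[1]


def percentile_bootstrap_ci(x, m, y, n_boot=2000, level=0.95, seed=0):
    """Percentile bootstrap confidence interval for the indirect effect."""
    rng = np.random.default_rng(seed)
    n = len(x)
    estimates = np.empty(n_boot)
    for i in range(n_boot):
        # Resample participants (rows) with replacement.
        idx = rng.integers(0, n, size=n)
        estimates[i] = indirect_effect(x[idx], m[idx], y[idx])
    # The interval bounds are percentiles of the bootstrap distribution.
    tail = (1.0 - level) / 2.0 * 100.0
    lower, upper = np.percentile(estimates, [tail, 100.0 - tail])
    return lower, upper


# Simulated (hypothetical) data with the same sample size as the survey.
rng = np.random.default_rng(42)
n = 239
x = rng.normal(size=n)                      # predictor
m = 0.5 * x + rng.normal(size=n)            # mediator
y = 0.4 * m + 0.1 * x + rng.normal(size=n)  # outcome

point = indirect_effect(x, m, y)
lower, upper = percentile_bootstrap_ci(x, m, y)
print(f"indirect effect = {point:.3f}, 95% CI = [{lower:.3f}, {upper:.3f}]")
```

An indirect effect is conventionally treated as statistically significant when the resulting interval excludes zero.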

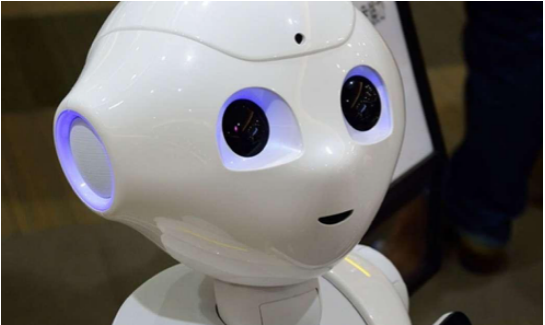

After consenting to participate, participants viewed an image and a textual description of the social robot Pepper. They then completed demographic questions on their age (M = 35.15), gender (86 female, 153 male), education (M = 4.75 years of college/university), and familiarity with robots (M = 3.56 on a 7-point Likert scale), as well as the warmth and competence items, the UTAUT assessments, and the trust and trustworthiness questionnaires. All responses were collected on 7-point Likert scales.

The stereotype content model [9] was used to measure first impressions, as it has been successfully applied to human perceptions of social robots [11]. The warmth and competence items used the prompt “As viewed by society, how ... are social robots?”. The warmth dimension contained four items: tolerant, warm, good natured, and sincere (α = 0.74). The competence dimension comprised five items: competent, confident, independent, competitive, and intelligent (α = 0.78). The UTAUT construct behavioral intention [18] consisted of three items (example: “I intend to use the robot”, α = 0.71). We used a measure of trust that accounts for its relationship with trustworthiness and provides assessments of robot ability, benevolence, and integrity [7]. Trust was measured directly with 10 questions (α = 0.84), for example “I would rely on the robot without hesitation”. Trustworthiness was measured along the dimensions of ability (six items, example: “I feel very confident about the robot’s skills”, α = 0.80), benevolence (five items, example: “the needs and desires of others are very important to the robot”, α = 0.82), and integrity (five items, example: “sound principles seem to guide the robot’s behavior”, α = 0.80). An aggregate of these three subscales produced the trustworthiness index (α = 0.85).
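The reliability coefficients reported above are Cronbach's α values. As a minimal sketch of how such a coefficient is obtained, the code below applies the standard formula α = k/(k − 1) · (1 − Σ item variances / variance of the summed score) to a four-item scale. The responses are simulated rather than taken from the study, and the function name, latent-trait model, and seed are assumptions made for illustration.

```python
import numpy as np


def cronbach_alpha(items):
    """Cronbach's alpha for a (participants x items) response matrix."""
    items = np.asarray(items, dtype=float)
    k = items.shape[1]
    # Sample variance of each item across participants.
    item_vars = items.var(axis=0, ddof=1)
    # Sample variance of each participant's summed score.
    total_var = items.sum(axis=1).var(ddof=1)
    return k / (k - 1) * (1.0 - item_vars.sum() / total_var)


# Simulated (hypothetical) responses: 239 participants, four items that
# share one latent trait, rounded and clipped to a 7-point Likert scale.
rng = np.random.default_rng(0)
latent = rng.normal(size=(239, 1))
raw = 4 + latent + rng.normal(size=(239, 4))
responses = np.clip(np.rint(raw), 1, 7)

alpha = cronbach_alpha(responses)
# One common way to form a scale score is the per-participant item mean.
scale_score = responses.mean(axis=1)
print(f"alpha = {alpha:.2f}")
```

The same function can be applied to a matrix of subscale scores (one column per subscale) to assess the reliability of a composite such as an index built from three subscales.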

This paper is available on arXiv under a CC 4.0 license.