Authors:

(1) Arindam Mitra;

(2) Luciano Del Corro, work done while at Microsoft;

(3) Shweti Mahajan, work done while at Microsoft;

(4) Andres Codas, equal contribution;

(5) Clarisse Simoes, equal contribution;

(6) Sahaj Agarwal;

(7) Xuxi Chen, work done while at Microsoft;

(8) Anastasia Razdaibiedina, work done while at Microsoft;

(9) Erik Jones, work done while at Microsoft;

(10) Kriti Aggarwal, work done while at Microsoft;

(11) Hamid Palangi;

(12) Guoqing Zheng;

(13) Corby Rosset;

(14) Hamed Khanpour;

(15) Ahmed Awadall.

Table of Links

Teaching Orca 2 to be a Cautious Reasoner

B. BigBench-Hard Subtask Metrics

C. Evaluation of Grounding in Abstractive Summarization

F. Illustrative Example from Evaluation Benchmarks and Corresponding Model Output

3 Teaching Orca 2 to be a Cautious Reasoner

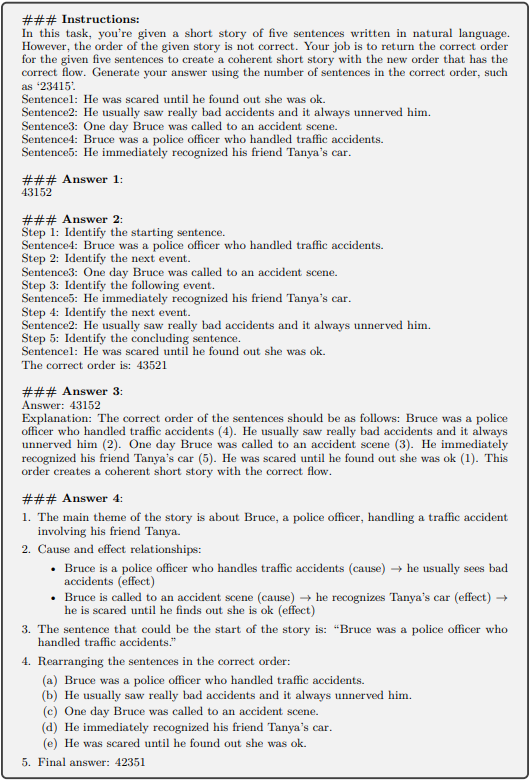

The key to Explanation Tuning is the extraction of answers with detailed explanations from LLMs based on system instructions. However, not every combination of system instruction and task is appropriate; in fact, the response quality can vary significantly based on the strategy described in the system instruction.

Even very powerful models like GPT-4 are susceptible to this variation. Consider Figure 3, which shows four different answers from GPT-4, obtained with four different system instructions, to a story-reordering question. The first answer (the default GPT-4 answer) is wrong. The second answer (using a chain-of-thought prompt) is better: the model reasons step-by-step, but important details guiding the decision process are still missing. The third answer (with an explain-your-answer prompt) is wrong, although its explanation is correct. The final answer is the only correct one and is obtained using the following system instruction:

First, we note that GPT-4’s response is significantly influenced by the given system instructions. Second, when carefully crafted, the instructions can substantially improve the quality and accuracy of GPT-4’s answers. Lastly, without such instructions, GPT-4 may struggle to recognize a challenging problem and might generate a direct answer without engaging in careful thinking. Motivated by these observations, we conclude that the strategy an LLM uses to reason about a task should depend on the task itself.

Even if all the answers provided were correct, the question remains: Which is the best answer for training a smaller model? This question is central to our work, and we argue that smaller models should be taught to select the most effective solution strategy based on the problem at hand. It is important to note that: (1) the optimal strategy might vary depending on the task and (2) the optimal strategy for a smaller model may differ from that of a more powerful one. For instance, while a model like GPT-4 may easily generate a direct answer, a smaller model might lack this capability and require a different approach, such as thinking step-by-step. Therefore, naively teaching a smaller model to “imitate” the reasoning behavior of a more powerful one may be sub-optimal. Although training smaller models towards step-by-step-explained answers has proven beneficial, training them on a plurality of strategies enables more flexibility to choose which is better suited to the task.

We use the term Cautious Reasoning to refer to the act of deciding which solution strategy to choose for a given task: either direct answer generation or one of many “Slow Thinking” [22] strategies (step-by-step, guess-and-check, explain-then-answer, etc.).

The following illustrates the process of training a Cautious Reasoning LLM:

1. Start with a collection of diverse tasks.

2. Guided by the performance of Orca, decide which tasks require which solution strategy (e.g. direct-answer, step-by-step, explain-then-answer, etc.).

3. Write task-specific system instruction(s) corresponding to the chosen strategy in order to obtain teacher responses for each task.

4. Prompt Erasing: At training time, replace the student’s system instruction with a generic one vacated of details of how to approach the task.

Note that step 3 has a broad mandate to obtain the teacher’s responses: it can utilize multiple calls, very detailed instructions, etc.

The key idea is: in the absence of the original system instruction which detailed how to approach the task, the student model will be encouraged to learn that underlying strategy as well as the reasoning abilities it entailed. We call this technique Prompt Erasing as it removes the structure under which the teacher framed its reasoning. Armed with this technique, we present Orca 2, a cautious reasoner.
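The data-construction side of this process can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' pipeline: the instruction strings, the `TrainingExample` record, the `build_training_example` helper, and the stub teacher are all hypothetical names introduced here, and a real teacher would be a call to a strong LLM.

```python
from dataclasses import dataclass
from typing import Callable, Dict

# Hypothetical generic instruction shown to the student (placeholder wording,
# not taken from the paper).
GENERIC_SYSTEM_INSTRUCTION = "You are a helpful assistant."

# Hypothetical strategy-specific instructions used only to query the teacher.
STRATEGY_INSTRUCTIONS: Dict[str, str] = {
    "direct-answer": "Answer the question directly.",
    "step-by-step": "Think step by step, then give the final answer.",
    "explain-then-answer": "Explain your reasoning first, then state the answer.",
}

# A teacher maps (system instruction, task prompt) to a response string.
Teacher = Callable[[str, str], str]


@dataclass(frozen=True)
class TrainingExample:
    system: str     # what the student sees as its system instruction
    user: str       # the task prompt
    assistant: str  # the teacher response the student is trained to produce


def build_training_example(task_prompt: str, strategy: str, teacher: Teacher) -> TrainingExample:
    """Query the teacher with a detailed instruction, then erase that instruction."""
    detailed_instruction = STRATEGY_INSTRUCTIONS[strategy]
    teacher_response = teacher(detailed_instruction, task_prompt)
    # Prompt Erasing: the student never sees the detailed instruction, only the
    # generic one, paired with the response the detailed one elicited.
    return TrainingExample(
        system=GENERIC_SYSTEM_INSTRUCTION,
        user=task_prompt,
        assistant=teacher_response,
    )


def stub_teacher(system_instruction: str, task_prompt: str) -> str:
    """Stand-in for a teacher LLM call; returns a fixed placeholder response."""
    return "Step 1: ... Step 2: ... Final answer: (placeholder)"


if __name__ == "__main__":
    example = build_training_example(
        "Reorder the following sentences into a coherent story: ...",
        "step-by-step",
        stub_teacher,
    )
    print(example.system)
```

The design point the sketch tries to capture is that the strategy lives only in the teacher's prompt: the stored example pairs the generic instruction with a strategy-shaped response, so the student has to infer when that strategy applies from the task itself.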

This paper is available on arXiv under a CC 4.0 license.